It’s the third instalment of our ‘Buzzwords Demystified’ series – we’ve already looked at the Internet of Things (IoT) and Artificial Intelligence (AI), and now it’s the turn of Big Data.

Touch on anything technical, and you’ll be assaulted by a blizzard of acronyms, abbreviations and buzzwords. They’re aimed at condensing complex or abstract terms into shorthand. But in their brevity lies danger. Detail is lost and a hole is created – one often plugged with people’s own interpretations that stray far from the original meaning. More than this, buzzwords can be a sign of mass hysteria: the crowd has become whipped up for the worst reason – everyone else is. So, the right moment to re-examine a buzzword’s promise, and confirm whether the optimism rests on firm foundations, is when you’ve noticed it’s spilled out of tech circles and into everyday conversation with family, friends and colleagues.

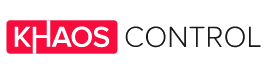

Big Data, as Google Trends confirms, has snatched the public’s attention, coming from almost nowhere in 2010 to claim high, and occasionally peak, interest since 2015.

What does Big Data mean?

The amount of data produced by society is vast. Every day an estimated 2.5 exabytes of data are generated, meaning on average 357 megabytes per person on the planet. Or half a CD-ROM. What’s an exabyte? A billion gigabytes. And our ability to store data is doubling roughly every 40 months, a trend whose sustainability looks guaranteed by the IoT bringing more devices online. That’s the ‘big’ in Big Data.
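The arithmetic behind those figures can be checked in a few lines. This is a rough sketch only: the world population of 7 billion and the 700 MB CD-ROM capacity are assumptions (neither is stated above), chosen because they reproduce the per-person figure quoted.

```python
# Back-of-the-envelope check of the figures quoted above.
EXABYTE = 10**18   # bytes; a billion gigabytes (decimal units)
MEGABYTE = 10**6   # bytes

DAILY_DATA_BYTES = 2.5 * EXABYTE   # estimate quoted in the article
WORLD_POPULATION = 7_000_000_000   # assumption, not from the article
CD_ROM_MB = 700                    # assumption: typical CD-ROM capacity


def per_person_mb(total_bytes, population):
    """Average share of the data per person, in megabytes."""
    return total_bytes / population / MEGABYTE


def storage_growth_factor(months, doubling_months=40):
    """How many times capacity multiplies if it doubles every `doubling_months`."""
    return 2 ** (months / doubling_months)


daily_mb = per_person_mb(DAILY_DATA_BYTES, WORLD_POPULATION)
print(f"{daily_mb:.0f} MB per person per day")
print(f"{daily_mb / CD_ROM_MB:.2f} of a CD-ROM")
print(f"{storage_growth_factor(120):.0f}x storage capacity after 10 years")
```

Under those assumptions the per-person share comes out at roughly 357 MB a day, and a 40-month doubling time compounds to an eightfold increase in storage capacity over a decade.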

But it’s useless if we’re unable to do anything with it, in other words, to interpret it. Fortunately, the processing power of the average computer, and the sophistication of the interpretative algorithms run on it, have grown consistently for decades. Hardware and software have kept up with the pile of information they’ve produced. Which means we’re able, in reasonable time, to comb through data sufficiently abundant to pick out meaningful trends, or patterns, that confirm or invalidate hypotheses.

What data and what patterns?

All you need is enough data and a rationale for processing it. Thousands upon thousands of weather stations globally log metrics ranging from temperature to wind speed to humidity, churning out vast quantities of information that feed into climate models. At the Large Hadron Collider (LHC), 600 million collisions between particles occur every second, and are captured to help unravel the universe’s fundamental laws. And 23andMe users, who have sent off saliva samples for DNA analysis, have their genetic data stored in one database. There are 1,000,000 such users worldwide, which has allowed conditions, diseases and traits to be linked to specific genes.

The Big Data philosophy, searching for meaning through oceans of information, extends to the ecommerce world. Retailers scrutinise shopping habits – what’s bought, by whom and when – to help anticipate what customers will buy next. Products can then be discounted or simply suggested, down to the individual, boosting conversion rates. Away from virtual shop floors and in the warehouse, data captured at every stage, from purchase order arrival to delivery despatch, reveals worker productivity and wider inefficiencies in process that hold businesses back.

What tangible return on investment (ROI) does Big Data offer?

A report commissioned by Teradata, which surveyed over 300 senior IT staff and decision makers at companies with average annual revenue of around £400 million, found two thirds pointed to solid returns from their Big Data investments. The remaining third were still awaiting a return. For those who received some payoff, the majority claimed it lay in the region of one to three per cent. For multimillion-pound firms, that’s no small change, contributing gains larger than some listed companies’ turnover.
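To put a rough number on that, here is a minimal sketch. It assumes the one to three per cent applies to annual revenue, which the survey summary above doesn’t spell out, and it uses the £400 million average as a stand-in for a typical respondent.

```python
# Rough scale of a 1-3% payoff for a firm of the survey's average size.
# Assumption: the percentage is taken against annual revenue.
AVERAGE_ANNUAL_REVENUE_GBP = 400_000_000
RETURN_RANGE_PERCENT = (1, 3)


def payoff_range(revenue, low_percent, high_percent):
    """Return the (low, high) payoff in pounds for a percentage range."""
    return revenue * low_percent / 100, revenue * high_percent / 100


low_gbp, high_gbp = payoff_range(AVERAGE_ANNUAL_REVENUE_GBP, *RETURN_RANGE_PERCENT)
print(f"£{low_gbp:,.0f} to £{high_gbp:,.0f} a year")
```

On that reading, the payoff for an average respondent would sit somewhere between £4 million and £12 million a year.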

Nevertheless, 56% of survey participants registered difficulty articulating a clear business case for Big Data. The implication is that any organisation thinking of collecting and interpreting reams of data, with all the IT infrastructure that implies, needs clear end goals, and a precise idea of where Big Data can add value and by how much. Perhaps that’s part of the reason why, when married to the report’s previous stat, one third were still awaiting a return – Big Data had been implemented, but what success looked like, or what the original problem(s) were, was unknown. Certainly, there must also be some who did see Big Data reap benefits, but were in the curious position of being surprised it did, or not entirely sure how it did, given their difficulty accounting for why it was needed in the first place.

There are other grounds for caution

Big Data’s usefulness relies on the models used to make sense of it. If they weight values incorrectly, or make other assumptions that prove baseless, they won’t yield anything intelligible. A case in point is Google Flu Trends, which combined search data with data from the Centers for Disease Control and Prevention, and models of the virus’s spread, to predict outbreaks. Despite initial success, Google Flu Trends became wildly inaccurate, consistently overestimating flu cases by as much as 100%. Why was this? Google’s techies believe they were capturing people searching for symptoms of similar, but unrelated, illnesses, and that searches spiked when flu was mentioned in the news. Their model couldn’t compensate.

Then there’s reading too much into the data, and falling into common statistical pitfalls that lead to poor conclusions. Forgetting that correlation does not equal causation is one of them. Global mean temperatures have steadily risen as the number of pirates has fallen. But should we fight climate change by promoting piracy? The human mind is fabulously gifted at connecting unrelated variables, reading meaning where none exists. And unfortunately, it’s not always easy to isolate variables so it’s obvious one thing is affecting another. Similarly, we often attach too much importance to the first piece of information we receive, in what’s called the anchoring effect.
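The pirates example is easy to reproduce. The sketch below uses entirely made-up numbers, not real temperature or piracy records: each series is generated from the year alone, so neither can influence the other, yet the Pearson correlation between them comes out strongly negative. The function and variable names are illustrative.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Synthetic, illustrative data only. Both series depend on time `t`
# (plus a small deterministic wobble), never on each other.
years = range(20)
temperature = [14.0 + 0.02 * t + 0.01 * ((t * 7) % 3 - 1) for t in years]
pirates = [5000 - 200 * t + 50 * ((t * 5) % 4 - 1.5) for t in years]

r = pearson(temperature, pirates)
print(f"Pearson r = {r:.2f}")
```

A coefficient close to -1 here says only that one series rises while the other falls. The shared driver is the passage of time, which is exactly the kind of hidden variable that makes a coincidence look like a cause.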

Big Data can offer greater understanding of the world

Which can mean greater efficiency and profit. But it’s no panacea, nor is it revolutionary. There’s no way to know in advance if it’ll yield useful information for you – no causal links may be found. Or you may think they exist, and are important, when they’re not. So, that high level of interest in ‘Big Data’ on Google Trends? It’s probably just hype. But that’ll subside as AI and machine learning process more data in a shorter time, and arrive at better conclusions.